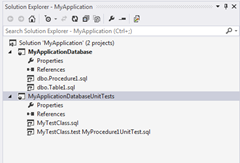

Integrating tsqlt with SQL Server Data Tools

As mentioned in a previous post, I've recently been working with a SQL Server unit test framework: tsqlt. I would like to share how I managed to integrate the framework and unit tests into my project life-cycle.

Unit tests should be treated the same as application code, meaning they should be contained within a project under source control. Being under source control allows the tests to be maintained and changes to be tracked when multiple people are helping to write tests. Having the unit tests in a project also means there is a deployment mechanism out of the box.

The tsqlt framework has a few requirements, which are as follows:

- CLR is enabled on the SQL Server instance
- The framework and the unit tests are contained within the same database as the objects being tested
- The database is set as trustworthy

As unit tests are required to be contained within the same database as the objects being tested, and also due to the way SQL Server Data Tools (SSDT) project validation works, th